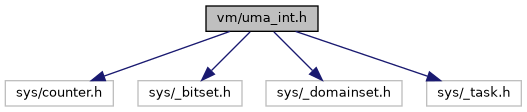

#include <sys/counter.h>

#include <sys/_bitset.h>

#include <sys/_domainset.h>

#include <sys/_task.h>

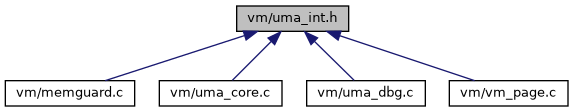

Go to the source code of this file.

Data Structures

| struct | uma_hash |

| struct | uma_bucket |

| struct | uma_cache_bucket |

| struct | uma_cache |

| struct | uma_domain |

| struct | uma_keg |

| struct | uma_slab |

| struct | uma_hash_slab |

| struct | uma_zone_domain |

| struct | uma_zone |

Macros

| #define | UMA_SLAB_SIZE PAGE_SIZE /* How big are our slabs? */ |

| #define | UMA_SLAB_MASK (PAGE_SIZE - 1) /* Mask to get back to the page */ |

| #define | UMA_SLAB_SHIFT PAGE_SHIFT /* Number of bits PAGE_MASK */ |

| #define | UMA_MAX_WASTE 10 |

| #define | UMA_CACHESPREAD_MAX_SIZE (128 * 1024) |

| #define | UMA_ZFLAG_OFFPAGE |

| #define | UMA_ZFLAG_HASH |

| #define | UMA_ZFLAG_VTOSLAB |

| #define | UMA_ZFLAG_CTORDTOR 0x01000000 /* Zone has ctor/dtor set. */ |

| #define | UMA_ZFLAG_LIMIT 0x02000000 /* Zone has limit set. */ |

| #define | UMA_ZFLAG_CACHE 0x04000000 /* uma_zcache_create()d it */ |

| #define | UMA_ZFLAG_BUCKET 0x10000000 /* Bucket zone. */ |

| #define | UMA_ZFLAG_INTERNAL 0x20000000 /* No offpage no PCPU. */ |

| #define | UMA_ZFLAG_TRASH 0x40000000 /* Add trash ctor/dtor. */ |

| #define | UMA_ZFLAG_INHERIT |

| #define | PRINT_UMA_ZFLAGS |

| #define | UMA_HASH_SIZE_INIT 32 |

| #define | UMA_HASH(h, s) ((((uintptr_t)s) >> UMA_SLAB_SHIFT) & (h)->uh_hashmask) |

| #define | UMA_HASH_INSERT(h, s, mem) |

| #define | UMA_HASH_REMOVE(h, s) LIST_REMOVE(slab_tohashslab(s), uhs_hlink) |

| #define | UMA_SUPER_ALIGN CACHE_LINE_SIZE |

| #define | UMA_ALIGN __aligned(UMA_SUPER_ALIGN) |

| #define | SLAB_MAX_SETSIZE (PAGE_SIZE / UMA_SMALLEST_UNIT) |

| #define | SLAB_MIN_SETSIZE _BITSET_BITS |

| #define | UZ_ITEMS_SLEEPER_SHIFT 44LL |

| #define | UZ_ITEMS_SLEEPERS_MAX ((1 << (64 - UZ_ITEMS_SLEEPER_SHIFT)) - 1) |

| #define | UZ_ITEMS_COUNT_MASK ((1LL << UZ_ITEMS_SLEEPER_SHIFT) - 1) |

| #define | UZ_ITEMS_COUNT(x) ((x) & UZ_ITEMS_COUNT_MASK) |

| #define | UZ_ITEMS_SLEEPERS(x) ((x) >> UZ_ITEMS_SLEEPER_SHIFT) |

| #define | UZ_ITEMS_SLEEPER (1LL << UZ_ITEMS_SLEEPER_SHIFT) |

| #define | ZONE_ASSERT_COLD(z) |

| #define | ZDOM_GET(z, n) (&((uma_zone_domain_t)&(z)->uz_cpu[mp_maxid + 1])[n]) |

| #define | KEG_LOCKPTR(k, d) (struct mtx *)&(k)->uk_domain[(d)].ud_lock |

| #define | KEG_LOCK_INIT(k, d, lc) |

| #define | KEG_LOCK_FINI(k, d) mtx_destroy(KEG_LOCKPTR(k, d)) |

| #define | KEG_LOCK(k, d) ({ mtx_lock(KEG_LOCKPTR(k, d)); KEG_LOCKPTR(k, d); }) |

| #define | KEG_UNLOCK(k, d) mtx_unlock(KEG_LOCKPTR(k, d)) |

| #define | KEG_LOCK_ASSERT(k, d) mtx_assert(KEG_LOCKPTR(k, d), MA_OWNED) |

| #define | KEG_GET(zone, keg) |

| #define | KEG_ASSERT_COLD(k) |

| #define | ZDOM_LOCK_INIT(z, zdom, lc) |

| #define | ZDOM_LOCK_FINI(z) mtx_destroy(&(z)->uzd_lock) |

| #define | ZDOM_LOCK_ASSERT(z) mtx_assert(&(z)->uzd_lock, MA_OWNED) |

| #define | ZDOM_LOCK(z) mtx_lock(&(z)->uzd_lock) |

| #define | ZDOM_OWNED(z) (mtx_owner(&(z)->uzd_lock) != NULL) |

| #define | ZDOM_UNLOCK(z) mtx_unlock(&(z)->uzd_lock) |

| #define | ZONE_LOCK(z) ZDOM_LOCK(ZDOM_GET((z), 0)) |

| #define | ZONE_UNLOCK(z) ZDOM_UNLOCK(ZDOM_GET((z), 0)) |

| #define | ZONE_LOCKPTR(z) (&ZDOM_GET((z), 0)->uzd_lock) |

| #define | ZONE_CROSS_LOCK_INIT(z) mtx_init(&(z)->uz_cross_lock, "UMA Cross", NULL, MTX_DEF) |

| #define | ZONE_CROSS_LOCK(z) mtx_lock(&(z)->uz_cross_lock) |

| #define | ZONE_CROSS_UNLOCK(z) mtx_unlock(&(z)->uz_cross_lock) |

| #define | ZONE_CROSS_LOCK_FINI(z) mtx_destroy(&(z)->uz_cross_lock) |

Typedefs

| typedef struct uma_bucket * | uma_bucket_t |

| typedef struct uma_cache_bucket * | uma_cache_bucket_t |

| typedef struct uma_cache * | uma_cache_t |

| typedef struct uma_domain * | uma_domain_t |

| typedef struct uma_keg * | uma_keg_t |

| typedef struct uma_slab * | uma_slab_t |

| typedef struct uma_hash_slab * | uma_hash_slab_t |

| typedef struct uma_zone_domain * | uma_zone_domain_t |

Functions

| LIST_HEAD (slabhashhead, uma_hash_slab) | |

| LIST_HEAD (slabhead, uma_slab) | |

| static void | cache_set_uz_flags (uma_cache_t cache, uint32_t flags) |

| static void | cache_set_uz_size (uma_cache_t cache, uint32_t size) |

| static uint32_t | cache_uz_flags (uma_cache_t cache) |

| static uint32_t | cache_uz_size (uma_cache_t cache) |

| struct uma_domain | __aligned (CACHE_LINE_SIZE) |

| BITSET_DEFINE (noslabbits, 0) | |

| _Static_assert (sizeof(struct uma_slab)==__offsetof(struct uma_slab, us_free), "us_free field must be last") | |

| _Static_assert (MAXMEMDOM < 255, "us_domain field is not wide enough") | |

| static uma_hash_slab_t | slab_tohashslab (uma_slab_t slab) |

| static void * | slab_data (uma_slab_t slab, uma_keg_t keg) |

| static void * | slab_item (uma_slab_t slab, uma_keg_t keg, int index) |

| static int | slab_item_index (uma_slab_t slab, uma_keg_t keg, void *item) |

| STAILQ_HEAD (uma_bucketlist, uma_bucket) | |

| static __inline uma_slab_t | hash_sfind (struct uma_hash *hash, uint8_t *data) |

| static __inline uma_slab_t | vtoslab (vm_offset_t va) |

| static __inline void | vtozoneslab (vm_offset_t va, uma_zone_t *zone, uma_slab_t *slab) |

| static __inline void | vsetzoneslab (vm_offset_t va, uma_zone_t zone, uma_slab_t slab) |

| static void | uma_total_dec (unsigned long size) |

| static void | uma_total_inc (unsigned long size) |

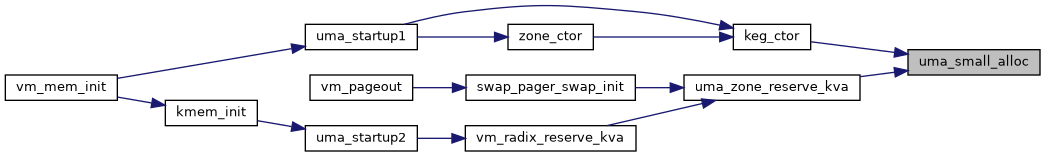

| void * | uma_small_alloc (uma_zone_t zone, vm_size_t bytes, int domain, uint8_t *pflag, int wait) |

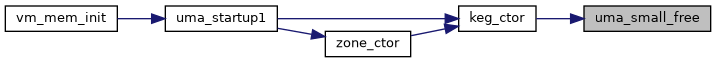

| void | uma_small_free (void *mem, vm_size_t size, uint8_t flags) |

| void | uma_set_limit (unsigned long limit) |

Variables

| struct uma_cache | UMA_ALIGN |

| struct mtx_padalign | ud_lock |

| struct slabhead | ud_part_slab |

| struct slabhead | ud_free_slab |

| struct slabhead | ud_full_slab |

| uint32_t | ud_pages |

| uint32_t | ud_free_items |

| uint32_t | ud_free_slabs |

| struct uma_keg | __aligned |

| struct uma_bucketlist | uzd_buckets |

| uma_bucket_t | uzd_cross |

| long | uzd_nitems |

| long | uzd_imax |

| long | uzd_imin |

| long | uzd_bimin |

| long | uzd_wss |

| long | uzd_limin |

| u_int | uzd_timin |

| smr_seq_t | uzd_seq |

| struct mtx | uzd_lock |

| unsigned long | uma_kmem_limit |

| unsigned long | uma_kmem_total |

Macro Definition Documentation

◆ KEG_ASSERT_COLD

| #define KEG_ASSERT_COLD(k) |

◆ KEG_GET

| #define KEG_GET(zone, keg) |

◆ KEG_LOCK

| #define KEG_LOCK(k, d) ({ mtx_lock(KEG_LOCKPTR(k, d)); KEG_LOCKPTR(k, d); }) |
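KEG_LOCK is written as a GNU C statement expression: it locks the per-domain keg mutex, and the whole expression then evaluates to that mutex's address, so a caller can lock and capture the pointer in one step (presumably so the unlock site need not recompute `KEG_LOCKPTR(k, d)`). The sketch below mimics that shape in user space. All `demo_` names are hypothetical, the mutex is a single-threaded mock rather than `struct mtx`, and statement expressions require GCC or Clang.

```c
#include <assert.h>

/* Hypothetical single-threaded mock of struct mtx: it only tracks ownership. */
struct demo_mtx {
	int owned;
};

static void
demo_mtx_lock(struct demo_mtx *m)
{
	assert(!m->owned);	/* a real mutex would block here instead */
	m->owned = 1;
}

static void
demo_mtx_unlock(struct demo_mtx *m)
{
	assert(m->owned);
	m->owned = 0;
}

#define DEMO_NDOMAINS	2

struct demo_domain {
	struct demo_mtx	ud_lock;	/* per-domain lock, cf. ud_lock */
	unsigned int	ud_free_items;	/* cf. ud_free_items */
};

struct demo_keg {
	struct demo_domain uk_domain[DEMO_NDOMAINS];
};

#define DEMO_KEG_LOCKPTR(k, d)	(&(k)->uk_domain[(d)].ud_lock)
/* GNU statement expression: take the lock, then yield its address. */
#define DEMO_KEG_LOCK(k, d)						\
	({ demo_mtx_lock(DEMO_KEG_LOCKPTR(k, d)); DEMO_KEG_LOCKPTR(k, d); })

/* Add n free items to one domain while holding only that domain's lock. */
static struct demo_mtx *
demo_keg_add_items(struct demo_keg *keg, int domain, unsigned int n)
{
	struct demo_mtx *lock;

	assert(domain >= 0 && domain < DEMO_NDOMAINS);
	lock = DEMO_KEG_LOCK(keg, domain);
	keg->uk_domain[domain].ud_free_items += n;
	demo_mtx_unlock(lock);
	return (lock);
}
```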

◆ KEG_LOCK_ASSERT

| #define KEG_LOCK_ASSERT(k, d) mtx_assert(KEG_LOCKPTR(k, d), MA_OWNED) |

◆ KEG_LOCK_FINI

| #define KEG_LOCK_FINI(k, d) mtx_destroy(KEG_LOCKPTR(k, d)) |

◆ KEG_LOCK_INIT

| #define KEG_LOCK_INIT(k, d, lc) |

◆ KEG_LOCKPTR

| #define KEG_LOCKPTR(k, d) (struct mtx *)&(k)->uk_domain[(d)].ud_lock |

◆ KEG_UNLOCK

| #define KEG_UNLOCK(k, d) mtx_unlock(KEG_LOCKPTR(k, d)) |

◆ PRINT_UMA_ZFLAGS

| #define PRINT_UMA_ZFLAGS |

◆ SLAB_MAX_SETSIZE

| #define SLAB_MAX_SETSIZE (PAGE_SIZE / UMA_SMALLEST_UNIT) |

◆ SLAB_MIN_SETSIZE

| #define SLAB_MIN_SETSIZE _BITSET_BITS |

◆ UMA_ALIGN

| #define UMA_ALIGN __aligned(UMA_SUPER_ALIGN) |

◆ UMA_CACHESPREAD_MAX_SIZE

| #define UMA_CACHESPREAD_MAX_SIZE (128 * 1024) |

◆ UMA_HASH

| #define UMA_HASH(h, s) ((((uintptr_t)s) >> UMA_SLAB_SHIFT) & (h)->uh_hashmask) |
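UMA_HASH turns a slab's data address into its page number (the shift by UMA_SLAB_SHIFT) and keeps the low bits with `uh_hashmask`, which only works as a modulo when the bucket count is a power of two. The sketch below reproduces that arithmetic, assuming 4 KiB pages and a table of UMA_HASH_SIZE_INIT (32) buckets; `demo_hash` and `demo_hash_bucket` are hypothetical.

```c
#include <assert.h>
#include <stdint.h>

#define DEMO_PAGE_SHIFT		12	/* assumption: 4 KiB pages */
#define DEMO_HASH_SIZE_INIT	32	/* mirrors UMA_HASH_SIZE_INIT */

struct demo_hash {
	uintptr_t uh_hashmask;		/* number of buckets - 1 */
};

/* Same shape as UMA_HASH(h, s): page number of s, masked to a bucket. */
#define DEMO_HASH(h, s)	((((uintptr_t)(s)) >> DEMO_PAGE_SHIFT) & (h)->uh_hashmask)

static uintptr_t
demo_hash_bucket(const struct demo_hash *h, uintptr_t addr)
{
	/* The mask acts as a modulo only for a power-of-two bucket count. */
	assert(((h->uh_hashmask + 1) & h->uh_hashmask) == 0);
	return (DEMO_HASH(h, addr));
}
```

Pages whose numbers differ by a multiple of 32 land in the same bucket (0x5000 and 0x25000 both map to bucket 5), so a lookup still has to walk the bucket's chain.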

◆ UMA_HASH_INSERT

| #define UMA_HASH_INSERT(h, s, mem) |

◆ UMA_HASH_REMOVE

| #define UMA_HASH_REMOVE(h, s) LIST_REMOVE(slab_tohashslab(s), uhs_hlink) |

◆ UMA_HASH_SIZE_INIT

| #define UMA_HASH_SIZE_INIT 32 |

◆ UMA_MAX_WASTE

| #define UMA_MAX_WASTE 10 |

◆ UMA_SLAB_MASK

| #define UMA_SLAB_MASK (PAGE_SIZE - 1) /* Mask to get back to the page */ |
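Because UMA_SLAB_SIZE is PAGE_SIZE and UMA_SLAB_MASK is PAGE_SIZE - 1, clearing the masked bits of an address inside a page-aligned slab yields the slab's base, and keeping them yields the offset within it. A minimal sketch, assuming 4 KiB pages; the `demo_` helpers are hypothetical.

```c
#include <assert.h>
#include <stdint.h>

#define DEMO_PAGE_SIZE	4096u			/* assumption: 4 KiB pages */
#define DEMO_SLAB_MASK	(DEMO_PAGE_SIZE - 1)	/* same form as UMA_SLAB_MASK */

/* The mask trick requires a power-of-two slab size. */
static_assert((DEMO_PAGE_SIZE & DEMO_SLAB_MASK) == 0,
    "slab size must be a power of two");

/* Round an item address down to the start of the slab page holding it. */
static uintptr_t
demo_item_to_slab(uintptr_t item)
{
	/* Widen before complementing so the high bits of the mask stay set. */
	return (item & ~(uintptr_t)DEMO_SLAB_MASK);
}

/* Byte offset of the item within its slab page. */
static uintptr_t
demo_item_offset(uintptr_t item)
{
	return (item & DEMO_SLAB_MASK);
}
```

The cast before `~` matters: complementing the 32-bit `unsigned int` constant first and then widening would clear the upper half of a 64-bit address.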

◆ UMA_SLAB_SHIFT

| #define UMA_SLAB_SHIFT PAGE_SHIFT /* Number of bits PAGE_MASK */ |

◆ UMA_SLAB_SIZE

| #define UMA_SLAB_SIZE PAGE_SIZE /* How big are our slabs? */ |

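◆ UMA_SUPER_ALIGN

| #define UMA_SUPER_ALIGN CACHE_LINE_SIZE |

◆ UMA_ZFLAG_BUCKET

| #define UMA_ZFLAG_BUCKET 0x10000000 /* Bucket zone. */ |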

◆ UMA_ZFLAG_CACHE

| #define UMA_ZFLAG_CACHE 0x04000000 /* uma_zcache_create()d it */ |

◆ UMA_ZFLAG_CTORDTOR

| #define UMA_ZFLAG_CTORDTOR 0x01000000 /* Zone has ctor/dtor set. */ |

◆ UMA_ZFLAG_HASH

| #define UMA_ZFLAG_HASH |

◆ UMA_ZFLAG_INHERIT

| #define UMA_ZFLAG_INHERIT |

◆ UMA_ZFLAG_INTERNAL

| #define UMA_ZFLAG_INTERNAL 0x20000000 /* No offpage no PCPU. */ |

◆ UMA_ZFLAG_LIMIT

| #define UMA_ZFLAG_LIMIT 0x02000000 /* Zone has limit set. */ |

◆ UMA_ZFLAG_OFFPAGE

| #define UMA_ZFLAG_OFFPAGE |

◆ UMA_ZFLAG_TRASH

| #define UMA_ZFLAG_TRASH 0x40000000 /* Add trash ctor/dtor. */ |

◆ UMA_ZFLAG_VTOSLAB

| #define UMA_ZFLAG_VTOSLAB |

◆ UZ_ITEMS_COUNT

| #define UZ_ITEMS_COUNT(x) ((x) & UZ_ITEMS_COUNT_MASK) |

◆ UZ_ITEMS_COUNT_MASK

| #define UZ_ITEMS_COUNT_MASK ((1LL << UZ_ITEMS_SLEEPER_SHIFT) - 1) |

◆ UZ_ITEMS_SLEEPER

| #define UZ_ITEMS_SLEEPER (1LL << UZ_ITEMS_SLEEPER_SHIFT) |

◆ UZ_ITEMS_SLEEPER_SHIFT

| #define UZ_ITEMS_SLEEPER_SHIFT 44LL |

◆ UZ_ITEMS_SLEEPERS

| #define UZ_ITEMS_SLEEPERS(x) ((x) >> UZ_ITEMS_SLEEPER_SHIFT) |

◆ UZ_ITEMS_SLEEPERS_MAX

| #define UZ_ITEMS_SLEEPERS_MAX ((1 << (64 - UZ_ITEMS_SLEEPER_SHIFT)) - 1) |
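The UZ_ITEMS_* macros split one 64-bit word in two: the low 44 bits (UZ_ITEMS_COUNT_MASK) hold an item count, and the upper 20 bits hold a sleeper count, so adding UZ_ITEMS_SLEEPER bumps the sleeper field by one without touching the count. The sketch copies the macro definitions from the table above; `demo_items_pack` is a hypothetical helper added for illustration.

```c
#include <assert.h>
#include <stdint.h>

/* Definitions copied from the macro table above. */
#define UZ_ITEMS_SLEEPER_SHIFT	44LL
#define UZ_ITEMS_SLEEPERS_MAX	((1 << (64 - UZ_ITEMS_SLEEPER_SHIFT)) - 1)
#define UZ_ITEMS_COUNT_MASK	((1LL << UZ_ITEMS_SLEEPER_SHIFT) - 1)
#define UZ_ITEMS_COUNT(x)	((x) & UZ_ITEMS_COUNT_MASK)
#define UZ_ITEMS_SLEEPERS(x)	((x) >> UZ_ITEMS_SLEEPER_SHIFT)
#define UZ_ITEMS_SLEEPER	(1LL << UZ_ITEMS_SLEEPER_SHIFT)

/* Hypothetical helper: build a packed word from a count and a sleeper total. */
static uint64_t
demo_items_pack(uint64_t count, uint64_t sleepers)
{
	assert(count <= (uint64_t)UZ_ITEMS_COUNT_MASK);
	assert(sleepers <= (uint64_t)UZ_ITEMS_SLEEPERS_MAX);
	return (count | (sleepers << UZ_ITEMS_SLEEPER_SHIFT));
}
```

Since both fields share one word, a single 64-bit atomic add can adjust them together, which is presumably why they are packed this way.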

◆ ZDOM_GET

| #define ZDOM_GET(z, n) (&((uma_zone_domain_t)&(z)->uz_cpu[mp_maxid + 1])[n]) |
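ZDOM_GET implies a layout in which the per-domain structures are stored directly after the per-CPU cache array `uz_cpu[0..mp_maxid]`, so one allocation holds the zone header, every CPU cache, and every domain. The sketch below models that layout with a C99 flexible array member. `demo_zone`, `demo_cache`, `demo_zone_domain`, and `demo_mp_maxid` (fixed at 3) are hypothetical; the cast is valid here only because `sizeof(struct demo_cache)` is a multiple of the domain struct's alignment.

```c
#include <assert.h>
#include <stdlib.h>

/* Hypothetical, trimmed-down stand-ins for struct uma_cache / uma_zone_domain. */
struct demo_cache {
	long uc_allocs;
};

struct demo_zone_domain {
	long uzd_nitems;
};

struct demo_zone {
	int			uz_ndomains;
	struct demo_cache	uz_cpu[];	/* [mp_maxid + 1] caches, then domains */
};

static int demo_mp_maxid = 3;	/* assumption: CPUs 0..3 */

/* Same shape as ZDOM_GET: the domain array starts right after the last cache. */
#define DEMO_ZDOM_GET(z, n)						\
	(&((struct demo_zone_domain *)&(z)->uz_cpu[demo_mp_maxid + 1])[n])

static struct demo_zone *
demo_zone_alloc(int ndomains)
{
	size_t ncpu = (size_t)demo_mp_maxid + 1;
	struct demo_zone *z;

	assert(ndomains > 0);
	/* One zeroed allocation: header, per-CPU caches, then per-domain data. */
	z = calloc(1, sizeof(*z) + ncpu * sizeof(struct demo_cache) +
	    (size_t)ndomains * sizeof(struct demo_zone_domain));
	if (z != NULL)
		z->uz_ndomains = ndomains;
	return (z);
}
```

ZONE_LOCK(z) is defined as ZDOM_LOCK(ZDOM_GET((z), 0)), so in this layout the zone lock is simply the lock of the first domain entry.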

◆ ZDOM_LOCK

| #define ZDOM_LOCK(z) mtx_lock(&(z)->uzd_lock) |

◆ ZDOM_LOCK_ASSERT

| #define ZDOM_LOCK_ASSERT(z) mtx_assert(&(z)->uzd_lock, MA_OWNED) |

◆ ZDOM_LOCK_FINI

| #define ZDOM_LOCK_FINI(z) mtx_destroy(&(z)->uzd_lock) |

◆ ZDOM_LOCK_INIT

| #define ZDOM_LOCK_INIT(z, zdom, lc) |

◆ ZDOM_OWNED

| #define ZDOM_OWNED(z) (mtx_owner(&(z)->uzd_lock) != NULL) |

◆ ZDOM_UNLOCK

| #define ZDOM_UNLOCK(z) mtx_unlock(&(z)->uzd_lock) |

◆ ZONE_ASSERT_COLD

| #define ZONE_ASSERT_COLD(z) |

◆ ZONE_CROSS_LOCK

| #define ZONE_CROSS_LOCK(z) mtx_lock(&(z)->uz_cross_lock) |

◆ ZONE_CROSS_LOCK_FINI

| #define ZONE_CROSS_LOCK_FINI(z) mtx_destroy(&(z)->uz_cross_lock) |

◆ ZONE_CROSS_LOCK_INIT

| #define ZONE_CROSS_LOCK_INIT(z) mtx_init(&(z)->uz_cross_lock, "UMA Cross", NULL, MTX_DEF) |

◆ ZONE_CROSS_UNLOCK

| #define ZONE_CROSS_UNLOCK(z) mtx_unlock(&(z)->uz_cross_lock) |

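◆ ZONE_LOCK

| #define ZONE_LOCK(z) ZDOM_LOCK(ZDOM_GET((z), 0)) |

◆ ZONE_LOCKPTR

| #define ZONE_LOCKPTR(z) (&ZDOM_GET((z), 0)->uzd_lock) |

◆ ZONE_UNLOCK

| #define ZONE_UNLOCK(z) ZDOM_UNLOCK(ZDOM_GET((z), 0)) |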

Typedef Documentation

◆ uma_bucket_t

| typedef struct uma_bucket* uma_bucket_t |

◆ uma_cache_bucket_t

| typedef struct uma_cache_bucket* uma_cache_bucket_t |

◆ uma_cache_t

| typedef struct uma_cache* uma_cache_t |

◆ uma_domain_t

| typedef struct uma_domain* uma_domain_t |

◆ uma_hash_slab_t

| typedef struct uma_hash_slab* uma_hash_slab_t |

◆ uma_keg_t

| typedef struct uma_keg* uma_keg_t |

◆ uma_slab_t

| typedef struct uma_slab* uma_slab_t |

◆ uma_zone_domain_t

| typedef struct uma_zone_domain* uma_zone_domain_t |

Function Documentation

◆ __aligned()

| struct uma_domain __aligned(CACHE_LINE_SIZE) |

◆ _Static_assert() [1/2]

| _Static_assert(MAXMEMDOM < 255, "us_domain field is not wide enough") |

◆ _Static_assert() [2/2]

| _Static_assert(sizeof(struct uma_slab) == __offsetof(struct uma_slab, us_free), "us_free field must be last") |
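This assertion requires `sizeof(struct uma_slab)` to equal the offset of `us_free`: the free-item bitset must be the last member with no padding after it, so `sizeof` covers only the fixed header and the bitset's real length can presumably be added per keg at runtime. The sketch shows the same pattern with a hypothetical `demo_slab` whose bitmap is a C99 flexible array member (the header instead appears to use the zero-size `noslabbits` bitset type); `demo_slab_sizeof` is also hypothetical.

```c
#include <assert.h>
#include <limits.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical slab header whose free-item bitmap is a trailing flexible array. */
struct demo_slab {
	uint16_t	us_freecount;
	uint8_t		us_flags;
	uint8_t		us_domain;
	unsigned long	us_free[];	/* bitmap; must stay the last member */
};

/* static_assert is <assert.h>'s spelling of _Static_assert. */
static_assert(sizeof(struct demo_slab) == offsetof(struct demo_slab, us_free),
    "us_free field must be last");

#define DEMO_LONG_BITS	(sizeof(unsigned long) * CHAR_BIT)

/* Bytes needed for a slab header that tracks nitems items. */
static size_t
demo_slab_sizeof(size_t nitems)
{
	size_t nwords = (nitems + DEMO_LONG_BITS - 1) / DEMO_LONG_BITS;

	return (sizeof(struct demo_slab) + nwords * sizeof(unsigned long));
}
```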

◆ BITSET_DEFINE()

| BITSET_DEFINE(noslabbits, 0) |
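BITSET_DEFINE(noslabbits, 0) declares a bitset type with no fixed bits, which fits a per-slab free-item map whose size (at most SLAB_MAX_SETSIZE bits) is only known per keg. The sketch assumes a set bit marks a free item and that allocation takes the lowest set bit; it uses a hypothetical fixed 100-item `demo_freeset` and plain word operations rather than the kernel's `BIT_*` macros.

```c
#include <assert.h>
#include <limits.h>
#include <stddef.h>

#define DEMO_WORD_BITS	(sizeof(unsigned long) * CHAR_BIT)
#define DEMO_NITEMS	100			/* hypothetical items per slab */
#define DEMO_NWORDS	((DEMO_NITEMS + DEMO_WORD_BITS - 1) / DEMO_WORD_BITS)

struct demo_freeset {
	unsigned long bits[DEMO_NWORDS];	/* bit i set => item i is free */
};

/* Mark every item free (a freshly allocated slab). */
static void
demo_freeset_fill(struct demo_freeset *fs)
{
	for (size_t w = 0; w < DEMO_NWORDS; w++)
		fs->bits[w] = 0;
	for (size_t i = 0; i < DEMO_NITEMS; i++)
		fs->bits[i / DEMO_WORD_BITS] |= 1UL << (i % DEMO_WORD_BITS);
}

/* Take the lowest-numbered free item; returns -1 when the slab is full. */
static int
demo_freeset_alloc(struct demo_freeset *fs)
{
	for (size_t w = 0; w < DEMO_NWORDS; w++) {
		if (fs->bits[w] == 0)
			continue;
		for (size_t b = 0; b < DEMO_WORD_BITS; b++) {
			if (fs->bits[w] & (1UL << b)) {
				fs->bits[w] &= ~(1UL << b);
				return ((int)(w * DEMO_WORD_BITS + b));
			}
		}
	}
	return (-1);
}

/* Return item i to the slab by setting its bit again. */
static void
demo_freeset_free(struct demo_freeset *fs, int i)
{
	assert(i >= 0 && i < DEMO_NITEMS);
	assert((fs->bits[i / DEMO_WORD_BITS] & (1UL << (i % DEMO_WORD_BITS))) == 0);
	fs->bits[i / DEMO_WORD_BITS] |= 1UL << (i % DEMO_WORD_BITS);
}
```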

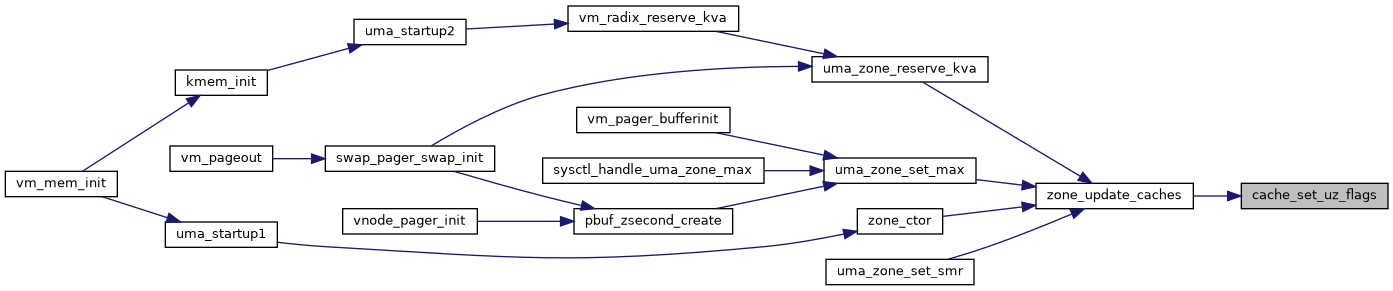

◆ cache_set_uz_flags()

| static void cache_set_uz_flags(uma_cache_t cache, uint32_t flags) | inline, static |

Definition at line 277 of file uma_int.h.

Referenced by zone_update_caches().

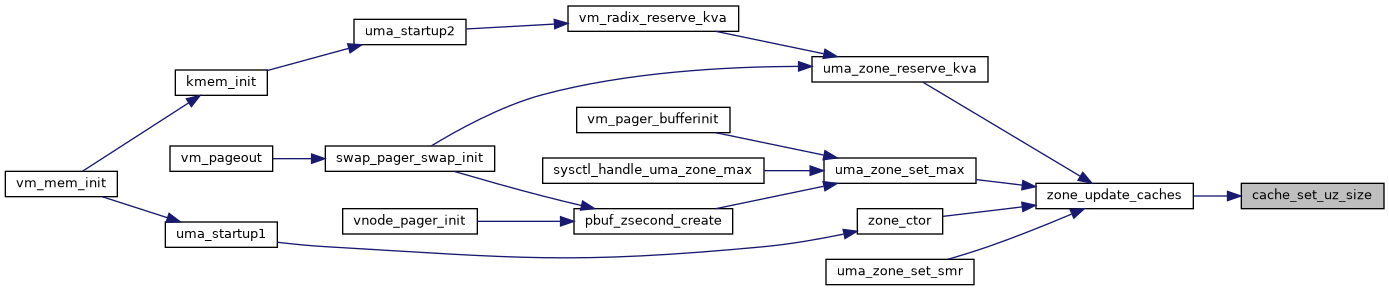

◆ cache_set_uz_size()

| static void cache_set_uz_size(uma_cache_t cache, uint32_t size) | inline, static |

Definition at line 284 of file uma_int.h.

Referenced by zone_update_caches().

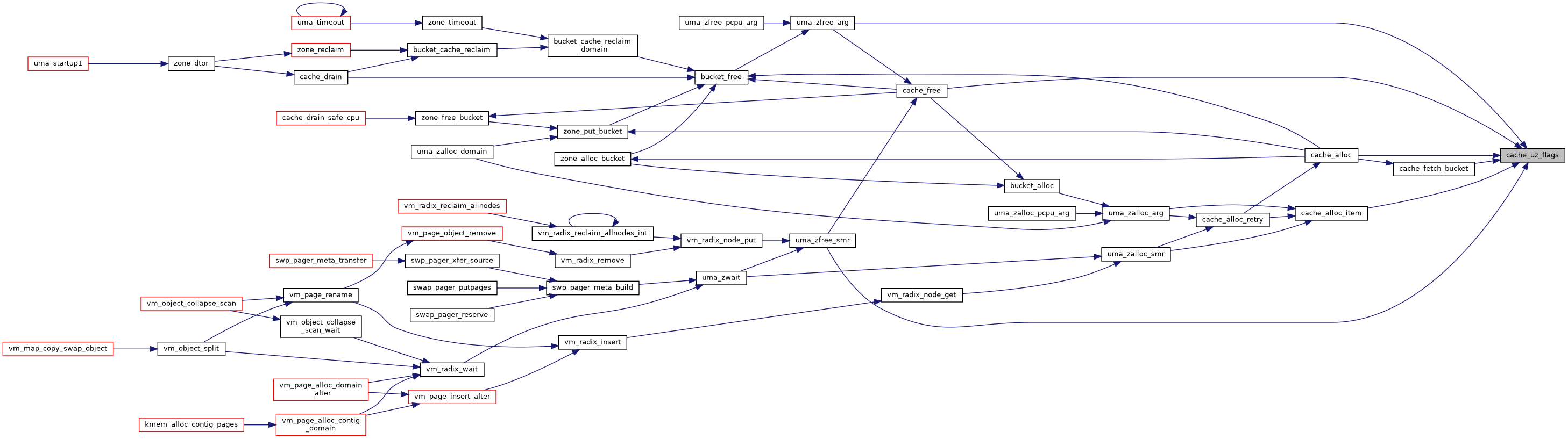

◆ cache_uz_flags()

| static uint32_t cache_uz_flags(uma_cache_t cache) | inline, static |

Definition at line 291 of file uma_int.h.

Referenced by cache_alloc(), cache_alloc_item(), cache_fetch_bucket(), cache_free(), uma_zfree_arg(), and uma_zfree_smr().

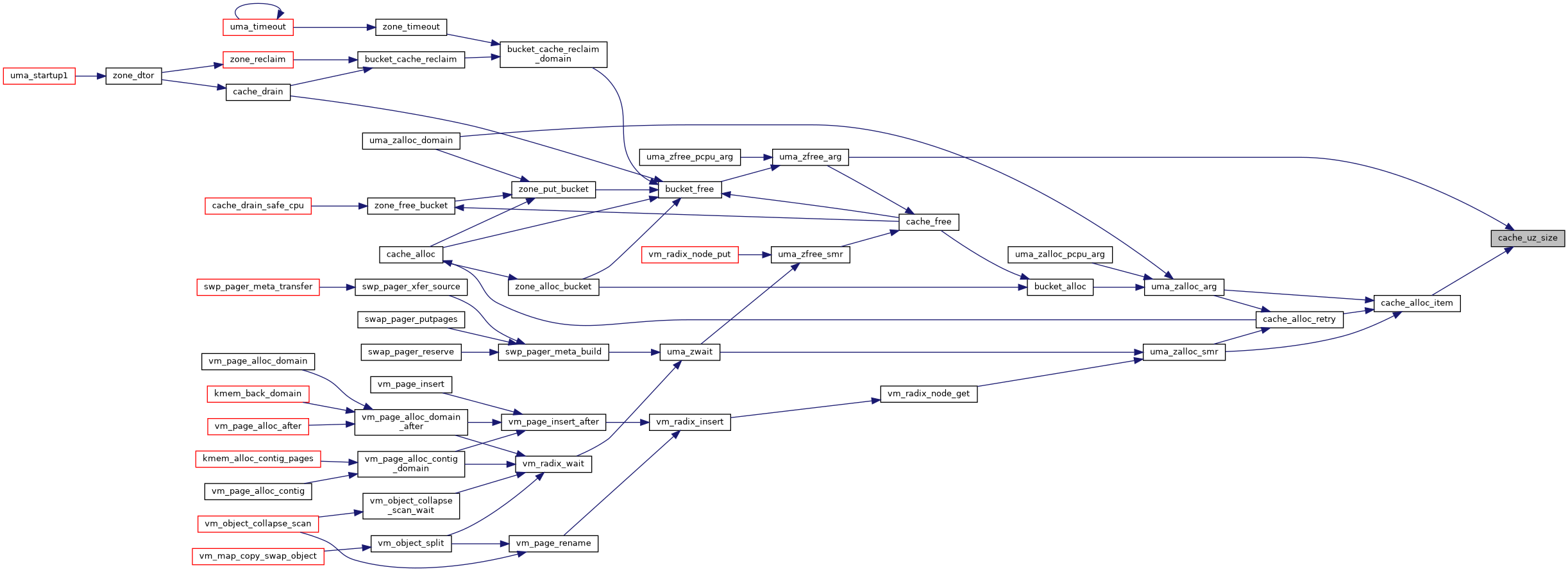

◆ cache_uz_size()

| static uint32_t cache_uz_size(uma_cache_t cache) | inline, static |

Definition at line 298 of file uma_int.h.

Referenced by cache_alloc_item(), and uma_zfree_arg().

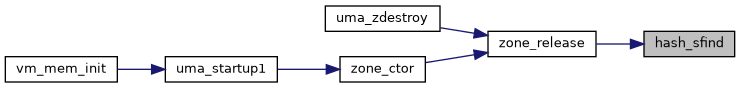

◆ hash_sfind()

| static __inline uma_slab_t hash_sfind(struct uma_hash *hash, uint8_t *data) | static |

Definition at line 595 of file uma_int.h.

Referenced by zone_release().
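A plausible reading of hash_sfind, for zones that keep slab headers in a hash table: hash the data address with UMA_HASH, then walk only that bucket's chain and return the entry whose data pointer matches exactly. The sketch uses the `<sys/queue.h>` LIST macros (available on the BSDs and glibc). All `demo_` names are hypothetical, 4 KiB pages are assumed, and `demo_hash_insert` is only loosely modeled on UMA_HASH_INSERT, whose body is not listed here.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <sys/queue.h>

#define DEMO_PAGE_SHIFT	12	/* assumption: 4 KiB pages */
#define DEMO_HASH_SIZE	32	/* mirrors UMA_HASH_SIZE_INIT */

/* Hypothetical off-page slab record, modeled on struct uma_hash_slab. */
struct demo_hash_slab {
	LIST_ENTRY(demo_hash_slab) uhs_hlink;	/* hash chain linkage */
	uint8_t		*uhs_data;		/* first byte of the slab's page */
};

LIST_HEAD(demo_slabhashhead, demo_hash_slab);

struct demo_hash {
	struct demo_slabhashhead uh_slab_hash[DEMO_HASH_SIZE];
	uintptr_t	uh_hashmask;
};

#define DEMO_HASH(h, s)	((((uintptr_t)(s)) >> DEMO_PAGE_SHIFT) & (h)->uh_hashmask)

static void
demo_hash_init(struct demo_hash *h)
{
	for (int i = 0; i < DEMO_HASH_SIZE; i++)
		LIST_INIT(&h->uh_slab_hash[i]);
	h->uh_hashmask = DEMO_HASH_SIZE - 1;
}

static void
demo_hash_insert(struct demo_hash *h, struct demo_hash_slab *hs, uint8_t *mem)
{
	assert(((uintptr_t)mem & (((uintptr_t)1 << DEMO_PAGE_SHIFT) - 1)) == 0);
	hs->uhs_data = mem;
	LIST_INSERT_HEAD(&h->uh_slab_hash[DEMO_HASH(h, mem)], hs, uhs_hlink);
}

/* Walk only the chain for data's bucket and match the exact page address. */
static struct demo_hash_slab *
demo_hash_sfind(struct demo_hash *h, uint8_t *data)
{
	struct demo_hash_slab *hs;

	LIST_FOREACH(hs, &h->uh_slab_hash[DEMO_HASH(h, data)], uhs_hlink) {
		if (hs->uhs_data == data)
			return (hs);
	}
	return (NULL);
}
```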

◆ LIST_HEAD() [1/2]

| LIST_HEAD(slabhashhead, uma_hash_slab) |

◆ LIST_HEAD() [2/2]

| LIST_HEAD(slabhead, uma_slab) |

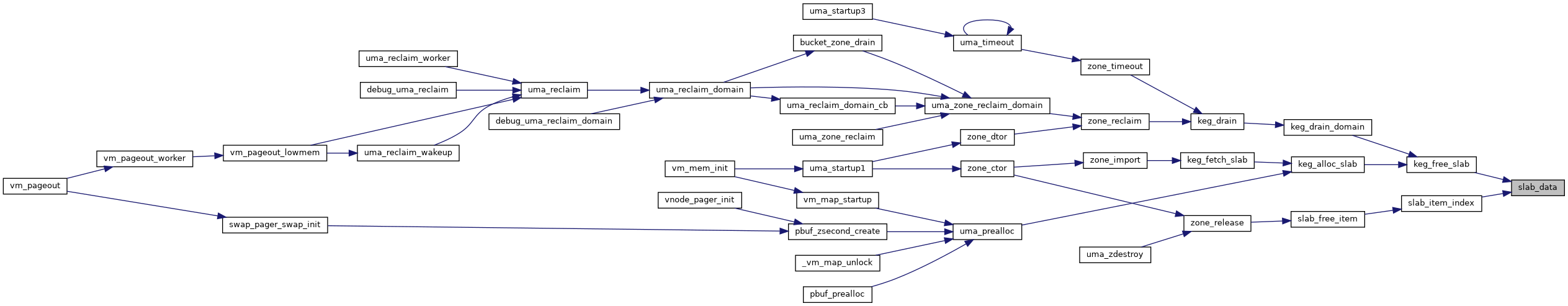

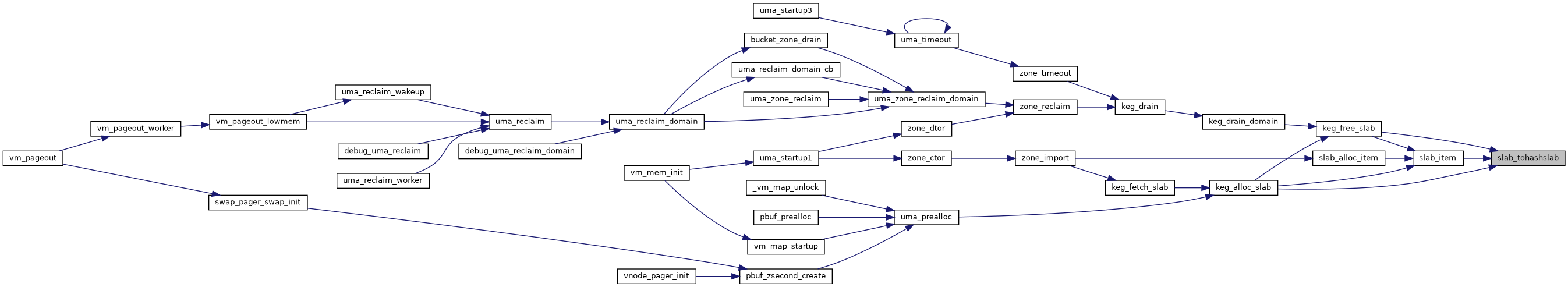

◆ slab_data()

| static void *slab_data(uma_slab_t slab, uma_keg_t keg) | inline, static |

Definition at line 402 of file uma_int.h.

References uma_hash_slab::uhs_slab.

Referenced by keg_free_slab(), and slab_item_index().

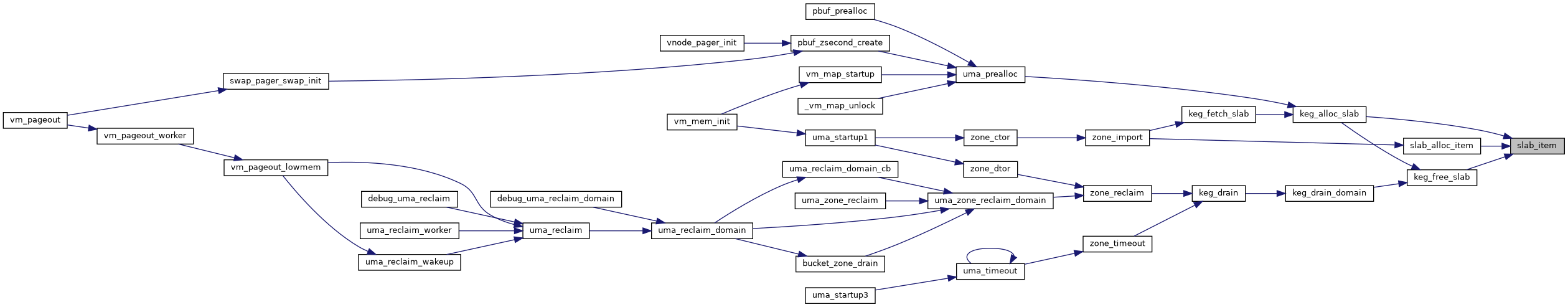

◆ slab_item()

| static void *slab_item(uma_slab_t slab, uma_keg_t keg, int index) | inline, static |

Definition at line 412 of file uma_int.h.

References slab_tohashslab(), uma_hash_slab::uhs_data, uma_keg::uk_flags, uma_keg::uk_pgoff, and UMA_ZFLAG_OFFPAGE.

Referenced by keg_alloc_slab(), keg_free_slab(), and slab_alloc_item().

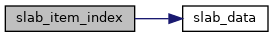

◆ slab_item_index()

| static int slab_item_index(uma_slab_t slab, uma_keg_t keg, void *item) | inline, static |

Definition at line 421 of file uma_int.h.

References slab_data(), and uma_keg::uk_rsize.

Referenced by slab_free_item().
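The references above (slab_item_index uses slab_data and uk_rsize) suggest that items sit back to back at a fixed stride of `uk_rsize` bytes from the slab's data start, so index to address is a multiplication and address to index is the inverse division. The sketch takes the data pointer as a parameter and skips how it is located (the real slab_item also consults UMA_ZFLAG_OFFPAGE and uk_pgoff, per its references). `demo_keg` and the `demo_` functions are hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical keg fields: only the rounded item size and count matter here. */
struct demo_keg {
	size_t	uk_rsize;	/* item size rounded up for alignment */
	int	uk_ipers;	/* items per slab */
};

/* index -> address: items are packed back to back from the data start. */
static void *
demo_slab_item(uint8_t *data, const struct demo_keg *keg, int index)
{
	assert(index >= 0 && index < keg->uk_ipers);
	return (data + (size_t)index * keg->uk_rsize);
}

/* address -> index: the inverse division; item must point at an item start. */
static int
demo_slab_item_index(uint8_t *data, const struct demo_keg *keg, void *item)
{
	ptrdiff_t off = (uint8_t *)item - data;

	assert(off >= 0 && (size_t)off % keg->uk_rsize == 0);
	return ((int)((size_t)off / keg->uk_rsize));
}
```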

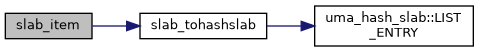

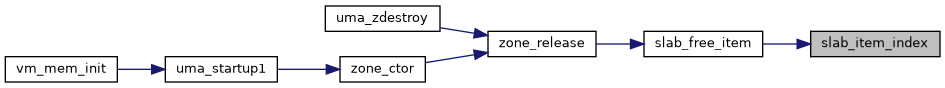

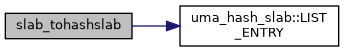

◆ slab_tohashslab()

| static uma_hash_slab_t slab_tohashslab(uma_slab_t slab) | inline, static |

Definition at line 395 of file uma_int.h.

References uma_hash_slab::LIST_ENTRY(), uma_hash_slab::uhs_data, and uma_hash_slab::uhs_slab.

Referenced by keg_alloc_slab(), keg_free_slab(), and slab_item().

◆ STAILQ_HEAD()

| STAILQ_HEAD(uma_bucketlist, uma_bucket) |

◆ uma_set_limit()

| void uma_set_limit(unsigned long limit) |

Definition at line 5318 of file uma_core.c.

References uma_kmem_limit.

◆ uma_small_alloc()

| void *uma_small_alloc(uma_zone_t zone, vm_size_t bytes, int domain, uint8_t *pflag, int wait) |

◆ uma_small_free()

| void uma_small_free(void *mem, vm_size_t size, uint8_t flags) |

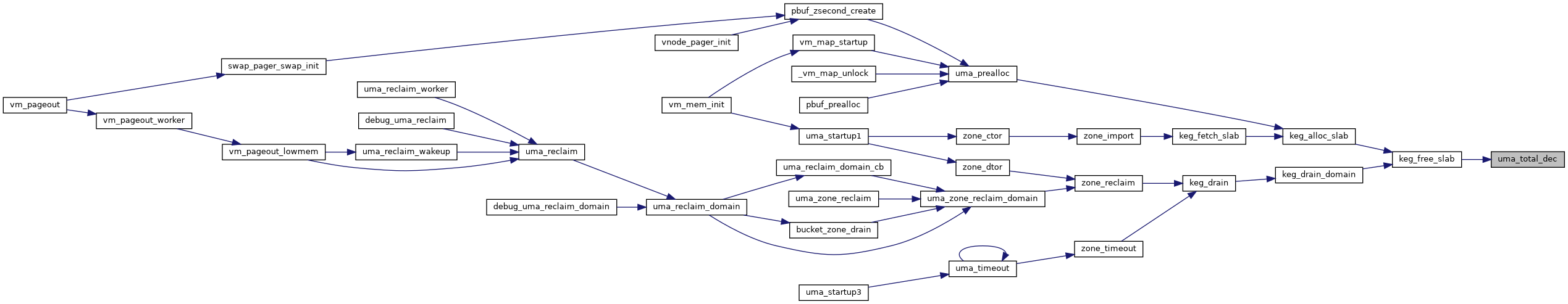

◆ uma_total_dec()

| static void uma_total_dec(unsigned long size) | inline, static |

Definition at line 643 of file uma_int.h.

Referenced by keg_free_slab().

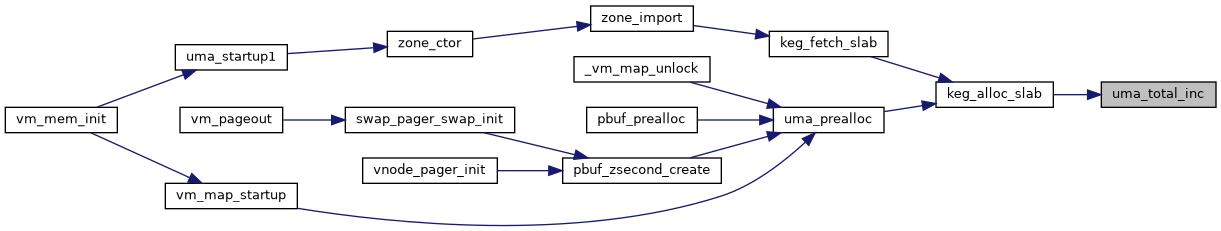

◆ uma_total_inc()

| static void uma_total_inc(unsigned long size) | inline, static |

Definition at line 650 of file uma_int.h.

References uma_kmem_total.

Referenced by keg_alloc_slab().
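Going by the names and references here, slab page allocation (keg_alloc_slab) raises a global byte total through uma_total_inc, slab release (keg_free_slab) lowers it through uma_total_dec, and uma_set_limit stores a ceiling in uma_kmem_limit. The sketch models that accounting with C11 atomics. The `demo_` names are hypothetical, and `demo_avail` in particular is an illustrative headroom check, not the documented behavior of `uma_avail()`.

```c
#include <assert.h>
#include <stdatomic.h>

/* Hypothetical user-space counterparts of uma_kmem_limit / uma_kmem_total. */
static atomic_ulong demo_kmem_limit;
static atomic_ulong demo_kmem_total;

static void
demo_set_limit(unsigned long limit)
{
	atomic_store(&demo_kmem_limit, limit);
}

/* Called when a slab's pages are allocated (cf. uma_total_inc). */
static void
demo_total_inc(unsigned long size)
{
	atomic_fetch_add(&demo_kmem_total, size);
}

/* Called when a slab's pages are released (cf. uma_total_dec). */
static void
demo_total_dec(unsigned long size)
{
	unsigned long old = atomic_fetch_sub(&demo_kmem_total, size);

	assert(old >= size);	/* catch accounting underflow */
}

/* Hypothetical headroom check; clamps at zero instead of wrapping. */
static unsigned long
demo_avail(void)
{
	unsigned long limit = atomic_load(&demo_kmem_limit);
	unsigned long total = atomic_load(&demo_kmem_total);

	return (total > limit ? 0 : limit - total);
}
```

The two loads in `demo_avail` are not a consistent snapshot; that is usually acceptable for a soft limit, where a slightly stale answer only shifts when reclamation kicks in.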

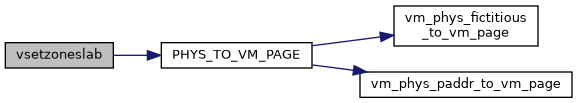

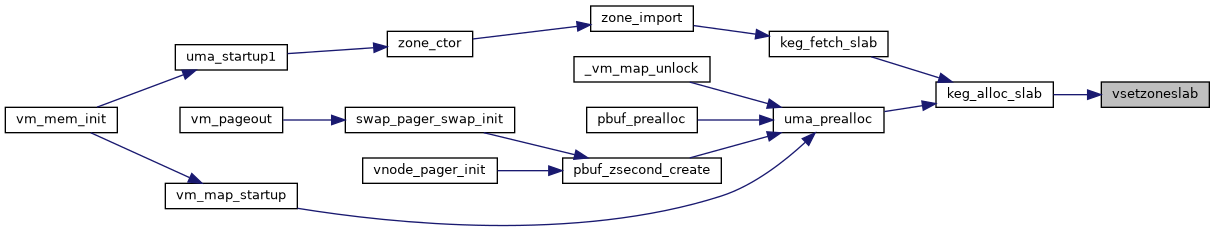

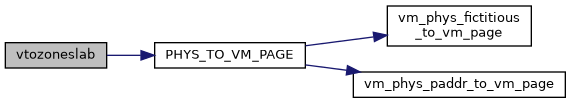

◆ vsetzoneslab()

| static __inline void vsetzoneslab(vm_offset_t va, uma_zone_t zone, uma_slab_t slab) | static |

Definition at line 629 of file uma_int.h.

References PHYS_TO_VM_PAGE().

Referenced by keg_alloc_slab().

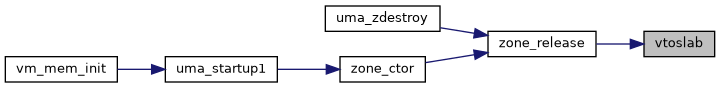

◆ vtoslab()

| static __inline uma_slab_t vtoslab(vm_offset_t va) | static |

Definition at line 610 of file uma_int.h.

Referenced by zone_release().

◆ vtozoneslab()

| static __inline void vtozoneslab(vm_offset_t va, uma_zone_t *zone, uma_slab_t *slab) | static |

Definition at line 619 of file uma_int.h.

References PHYS_TO_VM_PAGE().
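vsetzoneslab and vtozoneslab both reference PHYS_TO_VM_PAGE(), which suggests that the owning zone and slab are recorded in the per-page metadata when a slab is set up and read back later from any address inside that page. The sketch models this with a hypothetical table indexed by page number over a fixed arena. `demo_page`, `demo_arena_base`, and the `demo_` functions are assumptions standing in for `vm_page` and the kernel's address translation.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define DEMO_PAGE_SHIFT	12	/* assumption: 4 KiB pages */
#define DEMO_NPAGES	64	/* hypothetical arena size in pages */

struct demo_zone { int id; };	/* minimal stand-ins for uma_zone / uma_slab */
struct demo_slab { int id; };

/* Stand-in for the per-page metadata (vm_page) that the kernel consults. */
struct demo_page {
	struct demo_zone	*zone;
	struct demo_slab	*slab;
};

static struct demo_page demo_pages[DEMO_NPAGES];
static uintptr_t demo_arena_base;	/* page-aligned start of the arena */

static struct demo_page *
demo_va_to_page(uintptr_t va)
{
	uintptr_t idx = (va - demo_arena_base) >> DEMO_PAGE_SHIFT;

	assert(va >= demo_arena_base && idx < DEMO_NPAGES);
	return (&demo_pages[idx]);
}

/* cf. vsetzoneslab(): record the owners when a slab page is set up. */
static void
demo_setzoneslab(uintptr_t va, struct demo_zone *zone, struct demo_slab *slab)
{
	struct demo_page *p = demo_va_to_page(va);

	p->zone = zone;
	p->slab = slab;
}

/* cf. vtozoneslab(): any address inside the page finds the same owners. */
static void
demo_tozoneslab(uintptr_t va, struct demo_zone **zone, struct demo_slab **slab)
{
	struct demo_page *p = demo_va_to_page(va);

	*zone = p->zone;
	*slab = p->slab;
}
```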

Variable Documentation

◆ __aligned

| u_int __aligned |

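◆ ud_free_items

| uint32_t ud_free_items |

◆ ud_free_slab

| struct slabhead ud_free_slab |

◆ ud_free_slabs

| uint32_t ud_free_slabs |

◆ ud_full_slab

| struct slabhead ud_full_slab |

◆ ud_lock

| struct mtx_padalign ud_lock |

◆ ud_pages

| uint32_t ud_pages |

◆ ud_part_slab

| struct slabhead ud_part_slab |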

◆ UMA_ALIGN

| struct uma_cache UMA_ALIGN |

◆ uma_kmem_limit

| unsigned long uma_kmem_limit | extern |

Referenced by uma_avail(), uma_limit(), and uma_set_limit().

◆ uma_kmem_total

| unsigned long uma_kmem_total | extern |

Referenced by uma_size(), and uma_total_inc().

◆ uzd_bimin

| long uzd_bimin |

◆ uzd_buckets

| struct uma_bucketlist uzd_buckets |

◆ uzd_cross

| uma_bucket_t uzd_cross |

◆ uzd_imax

| long uzd_imax |

Definition at line 3 of file uma_int.h.

Referenced by cache_alloc().

◆ uzd_imin

| long uzd_imin |

◆ uzd_limin

| long uzd_limin |

◆ uzd_lock

| struct mtx uzd_lock |

◆ uzd_nitems

| long uzd_nitems |

Definition at line 2 of file uma_int.h.

Referenced by zone_domain_highest().